Storage Assessment Foundry – SAF Analyze

Exercise Summary

0 of 1 Questions completed

Questions:

Information

You have already completed the exercise before. Hence you can not start it again.

Exercise is loading…

You must sign in or sign up to start the exercise.

You must first complete the following:

Results

Results

0 of 1 Questions answered correctly

Time has elapsed

You have reached 0 of 0 point(s), (0)

Earned Point(s): 0 of 0, (0)

0 Essay(s) Pending (Possible Point(s): 0)

Categories

- Not categorized 0%

- 1

- Current

- Review

- Answered

- Correct

- Incorrect

-

Question 1 of 1

1. Question

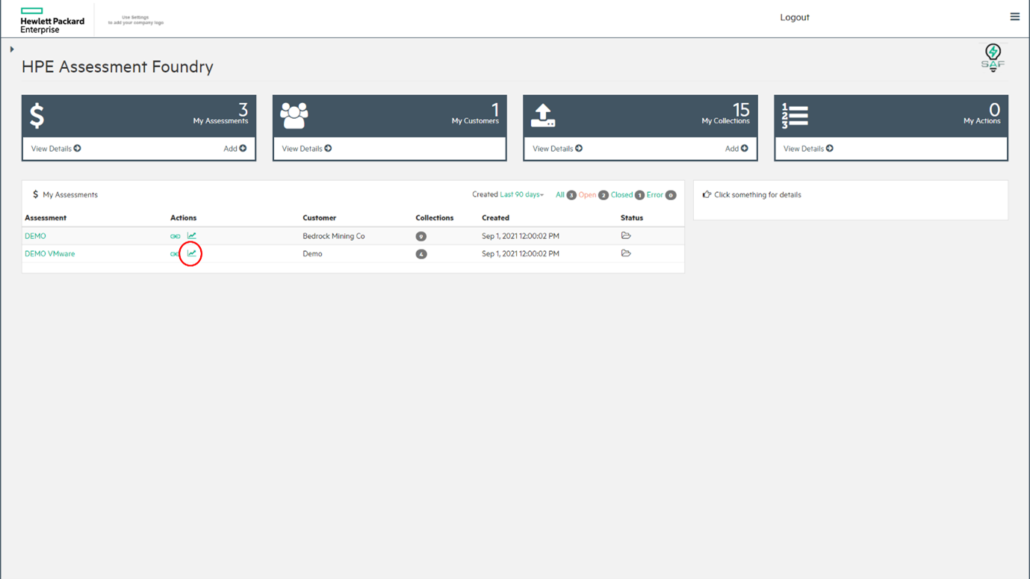

Navigate to the HPE Assessment Foundry

Ensure that you are viewing the main dashboard:

Click on the Analyse icon next to the DEMO VMware project.

Click on the VMware tab.

Explore the analysis dashboard. The questions below can be used as a guide to help you find out more. Note that there are some notes at the end of this section to help you understand some of the metrics that are displayed.

How many guest virtual machines are there in total?

What is the physical to virtual CPU ratio?

How is the available storage capacity?

What information is not supplied about virtual machines which are powered off?

Are all the ESX hosts running the same operating system version?

Explore the tabs which provide more detail.

Which ESX host has the longest reported response time for write operations?

Which is the most commonly deployed operating system on the virtual machines?

What are the estimated costs to migrate this infrastructure to Amazon Web Services?

Export the report to a MS Word document and MS Excel spreadsheet.

Open the files and review how the details are presented.

How useful are these reports for customer discussions?

Notes

Description of report contents

Input/Output operations per second (IOPS) is a measurement used to characterize storage devices, such as hard-disk drives (HDDs). The top row has the numbers that can be used as the base numbers (as in absolute minimum required numbers) when sizing the solution in NinjaSTARS. However, it is more likely that certain growth in capacity and IOPS must be taken into consideration. In the report, there is a section named “Call to Action,” where the Solution Architect can add projected growth and other remarks.

The top row is divided into three groups: IO/s (IOPS), response times, and capacity. To describe the characteristics of a workload, you must specify at least three metrics: IOPS, response time, and IO transfer size. For example, an array may be able to handle 150 K IOPS, but without the response time, it is not clear if that is good or bad. Combining the three metrics defines a specification that can be used in the design process. Combining this information results in a baseline for the given workload that can be used in the solution sizing process.

IOPS

This section describes the IOPS (and MiB/s throughput as a fourth metric) for the workload and is divided into IO/s, MB/s (throughput), and block size. These numbers are displayed for a selectable percentile. By default, the selected percentile is 95th (P95). The P95 is commonly seen when sizing, as the most representative for a workload. However, if a different percentile is preferred, use the drop-down menu to change it.

IO/s represents the number of IOPS for the workload, where MB/s represents the throughput in mebibytes per second of the workload, and the last number is the IO transfer size (or block size) for the workload. The IO transfer size can be displayed in two ways: as an estimated value or as the distributed value. When collecting performance data from arrays, not all arrays supply the histogram for the IO transfer size. When this is the case, SAF creates a simulated histogram, based on the average transfer size given by the array. So each sample in time has the average IO transfer size of that sample (instead of a real histogram where each sample in time has the numbers for each of the IO transfer size buckets).

The value displayed is the weighted average of the IO transfer size, shown as “Estimated Average” IO transfer size in kibibytes. When the array does supply the histogram data for the IO transfer size, the value displayed is the “most common” IO transfer size in kibibytes. The SAF Analyse module auto detects the availability of the true or simulated histogram, and adapts accordingly. When a simulated histogram is used, it is shown as “Est.,” which is short for “Estimated.

Response times

The middle section shows the response times for reads and writes and the read/write ratio. This section describes the analyzed response time, one of the three metrics required to define the workload specifications. The response time is divided into read and write response times, as they can be quite different. Write response times are normally faster than reads, as writes are, under normal conditions, stored in DRAM cache and written to disk asynchronously. Reads are, when not cached (“cache-miss”), come directly from disk, either HDDs or solid-state disks (SSDs), which have lower performance than DRAM cache. The write ratio is therefore significant as writes create a higher load at the backend due to

RAID protection generating additional backend IOPS. When analyzing the workload, the write ratio is an important factor when sizing arrays. These numbers are for host IOs (not backend IOs), so they represent the “true” workload. These numbers are displayed for a selectable percentile, by P95.

Capacity

The third section shows the capacity in gibibytes or tebibytes for the workload. Some vendors use terms differently, so SAF “translates” the terms the vendor uses to the equivalent for HPE Storage arrays. This section includes the IO transfer size typical characteristics (which is simulated when the array does not have the histogram values). Using a distributed model, when looking at the IO transfer size measurements, it reveals concentrations of values that may be separated from one another. Instead, using the overall average block size, which is a single value, when sizing a workload would not reflect reality. For instance, the average block size could be 48.7 KiB; however, when looking at the distributed model, the majority of the IOs can be 8 KiB, with some 256 KiB used for sequential reads (backups) and

none of the IOs having a transfer size close to the average of 48.7 KiB. Sizing based on 48.7 KiB would result in a configuration that is not fine-tuned for the real workload.

Detailed analysis

The lower half of the analysis pane shows the “Collections” overview with graphs displaying just the six “core” values for each collection. Sometimes some more detailed insight into the performance may be required (for instance, if the core values are different from what was to be expected, see if there is an atypical pattern in IO load and so forth). To view these, click the “Details” tab, and select one of the “mini” graphs.

To zoom in on the data, drag the graph to the desired time window. Remember this data is not intended to be used to troubleshoot performance issues, as the sampling time is not fine-grained enough to do so. The performance data collected is intended to get more insights on the workload, where the workload report can be used to more accurately design HPE Storage arrays.

-

This response will be reviewed and graded after submission.

Grading can be reviewed and adjusted.Grading can be reviewed and adjusted. -